Google today unveiled Gemini, its most powerful generative AI (genAI) software model to date — and it comes in three different sizes so it can be used in everything from data centers to mobile devices.

Google has been developing the Gemini large language model (LLM) over the past eight months and recently gave a small group of companies access to an early version.

The conversational genAI tool is by far Google’s most powerful, according to the company, and it could be a serious challenger to other LLMs such as Meta’s Llama 2 and OpenAI’s GPT-4.

“This new era of models represents one of the biggest science and engineering efforts we’ve undertaken as a company,” Google CEO Sundar Pichai wrote in a blog post.

The new LLM can accept multiple types of input, such as photos, audio, and video, which makes it what’s known as a multimodal model. Until now, the standard approach to creating multimodal models has been to train separate components for different modalities and then stitch them together.

“These models can sometimes be good at performing certain tasks, like describing images, but struggle with more conceptual and complex reasoning,” Pichai said. “We designed Gemini to be natively multimodal, pre-trained from the start on different modalities. Then we fine-tuned it with additional multimodal data to further refine its effectiveness.”

Gemini 1.0 will come in three different sizes:

Gemini Ultra — the largest “and most capable” model for highly complex tasks.

Gemini Pro — the model “best suited” for scaling across a wide range of tasks.

Gemini Nano — a version created for on-device tasks.


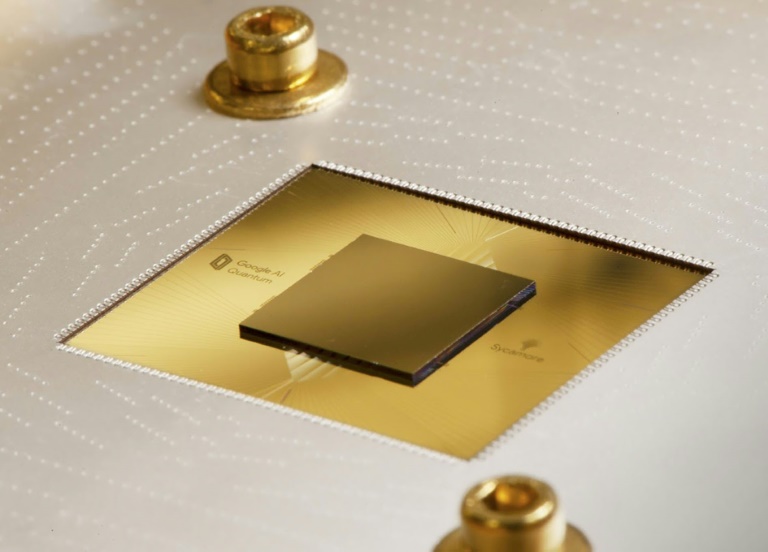

In conjunction with the launch, Google also introduced its most powerful ASIC, the Cloud TPU v5p, designed specifically to handle the massive processing demands of AI. The new chip can train LLMs 2.8 times faster than Google’s previous TPU v4, the company said.

LLMs are the algorithmic platforms for generative AI chatbots, such as Bard and ChatGPT.

Earlier this year, Google announced the general availability of Cloud TPU v5e, which boasted 2.3 times the price-performance of the previous-generation TPU v4. While far faster than v4, the TPU v5p is also priced at three-and-a-half times as much.
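To put the quoted TPU figures side by side, here is a rough back-of-the-envelope sketch in Python. It assumes the 2.8x training speedup and the 3.5x price are measured on the same basis (for example, per chip-hour), which the article does not state. The function and constant names are illustrative only and are not part of any Google API.

```python
import math


def relative_price_performance(speedup: float, price_ratio: float) -> float:
    """Return work done per unit of cost, relative to a baseline of 1.0.

    speedup     -- how many times faster than the baseline (hypothetical input)
    price_ratio -- how many times more expensive than the baseline
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return speedup / price_ratio


# Figures quoted in the article, all relative to TPU v4.
V5P_SPEEDUP_VS_V4 = 2.8      # "2.8 times faster" at training LLMs
V5P_PRICE_VS_V4 = 3.5        # "three-and-a-half times" the price
V5E_PRICE_PERF_VS_V4 = 2.3   # quoted directly as price-performance

# ASSUMPTION: speedup and price are comparable on a per-unit basis.
v5p_price_perf = relative_price_performance(V5P_SPEEDUP_VS_V4, V5P_PRICE_VS_V4)

print(f"TPU v5p price-performance vs. v4: {v5p_price_perf:.2f}x")
print(f"TPU v5e price-performance vs. v4: {V5E_PRICE_PERF_VS_V4:.2f}x")
```

Under that assumption, the v5p would deliver roughly 0.8 times the training throughput per dollar of a v4, which would suggest it is aimed at raw speed rather than cost efficiency. This is only an estimate, since Google’s own benchmarks may define both numbers differently.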

Google’s new Gemini LLM is immediately available in some of Google’s core products. For example, the Bard chatbot is using a version of Gemini Pro for more advanced reasoning, planning, and understanding.

The Pixel 8 Pro is the first smartphone engineered for Gemini Nano, using it in features like Summarize in Recorder and Smart Reply in Gboard. “And we’re already starting to experiment with Gemini in Search, where it’s making our Search Generative Experience (SGE) faster,” Google said. “Early next year, we’ll bring Gemini Ultra to a new Bard Advanced experience. And in the coming months, Gemini will power features in more of our products and services like…

2023-12-12 03:00:04

Source: www.computerworld.com